In this post, I’ll go over how to migrate a VMware App Volumes SQL Database to a new server (or location), and why you may want to do this.

VMware App Volumes stores all of its configuration data in a Microsoft SQL Database. This database is used and shared by all the App Volumes Managers in an environment.

Before modifying your deployment, make sure you have proper backups in place.

Why move the database?

There are a number of reasons why you may want to move your VMware App Volumes SQL Database. These include (but are not limited to):

- Migrating from a standard SQL Server deployment to a highly available Microsoft SQL Server Always On Availability Group

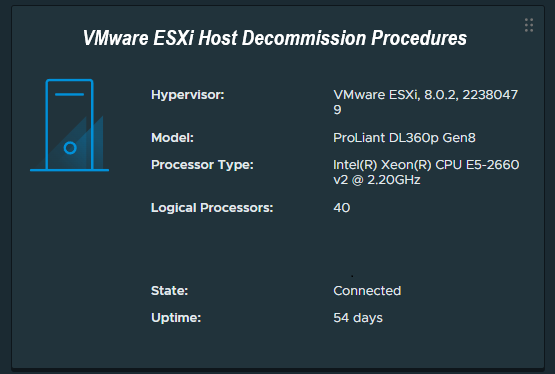

- Deploying a new Microsoft SQL Server and decommissioning your old SQL Server

In any case, we need to be able to move the SQL database to a new server and/or location.

Considerations

When moving the VMware App Volumes SQL Database, you’ll need to shut down all of your VMware App Volumes Manager Servers.

Note that while the managers are down, new VDI sessions may be unable to attach App Volumes VMDKs. If your environment is properly configured, however, App Volumes apps already attached to existing sessions shouldn’t be interrupted. If you’re in a zero-downtime environment, make sure any users who may need apps log on and have them attached before you start the migration and maintenance.
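Stopping the services by hand gets repetitive when you have several managers. The following is a minimal Python sketch that builds (and, if you turn off the dry run, runs) the standard Windows `sc.exe \\host stop <service>` command against each manager. The host names and the service name are hypothetical placeholders: look up the actual service key name (not the display name) in services.msc on your managers, and run it from an account with administrative rights on those servers.

```python
import subprocess
from typing import List

# Hypothetical placeholders: use your own manager host names and the service
# key name(s) shown in services.msc on the App Volumes Manager servers.
MANAGER_HOSTS = ["avmgr01", "avmgr02"]
SERVICE_NAMES = ["<App Volumes Manager service name>"]


def build_stop_commands(hosts: List[str], services: List[str]) -> List[List[str]]:
    """Build one 'sc.exe \\\\host stop service' command per host/service pair."""
    return [["sc.exe", f"\\\\{host}", "stop", service] for host in hosts for service in services]


def stop_services(hosts: List[str], services: List[str], dry_run: bool = True) -> None:
    for cmd in build_stop_commands(hosts, services):
        if dry_run:
            print(" ".join(cmd))  # review the commands before running them for real
            continue
        # sc.exe only sends the stop request; confirm afterwards with 'sc.exe \\host query <service>'.
        result = subprocess.run(cmd, capture_output=True, text=True)
        print(cmd[1], result.returncode, result.stdout.strip())


if __name__ == "__main__":
    stop_services(MANAGER_HOSTS, SERVICE_NAMES)
```

The dry run is the default on purpose: it prints every command so you can check the host list before any service is actually stopped.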

The ODBC configuration on your App Volumes Manager servers will be updated during this process.

Always make a backup of your App Volumes Manager servers and SQL database before making any changes.
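For the SQL database itself, one common approach (not the only one; SQL Server Management Studio works too) is a native backup on the old server followed by a restore on the new one. The Python sketch below only builds the T-SQL statements for a DBA to review and run. The database name and backup share are hypothetical, and restoring onto a different server may also require MOVE clauses and creating the SQL login App Volumes uses, since logins are not stored inside the database backup.

```python
def quote_identifier(name: str) -> str:
    """Bracket-quote a T-SQL identifier, doubling any ']' characters."""
    return "[" + name.replace("]", "]]") + "]"


def quote_literal(value: str) -> str:
    """Return a T-SQL N'...' string literal with single quotes doubled."""
    return "N'" + value.replace("'", "''") + "'"


def backup_statement(database: str, backup_file: str) -> str:
    # COPY_ONLY takes a full backup without resetting the differential base
    # used by your regular backup schedule; CHECKSUM asks SQL Server to verify
    # page checksums while it reads the database.
    return (f"BACKUP DATABASE {quote_identifier(database)} "
            f"TO DISK = {quote_literal(backup_file)} WITH COPY_ONLY, CHECKSUM;")


def restore_statement(database: str, backup_file: str) -> str:
    # Add MOVE ... TO ... clauses if the new server uses different data/log paths.
    return (f"RESTORE DATABASE {quote_identifier(database)} "
            f"FROM DISK = {quote_literal(backup_file)} WITH RECOVERY;")


if __name__ == "__main__":
    # Hypothetical database name and backup share.
    print(backup_statement("AppVolumes", r"\\fileserver\sqlbackup\AppVolumes.bak"))
    print(restore_statement("AppVolumes", r"\\fileserver\sqlbackup\AppVolumes.bak"))
```

Generating the statements rather than executing them keeps the sketch free of driver dependencies and leaves the final review with whoever owns the SQL servers.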

Migrating the App Volumes Database to a new SQL Server

To migrate the database, we’ll essentially shut down all the App Volumes services, migrate the database, modify a configuration file, bring up a single App Volumes Manager server, confirm everything is working, and then update and bring online the remaining App Volumes Manager servers.

Perform the following steps to migrate the database:

- Perform Backups

- Snapshot App Volumes Manager Servers

- Back up the SQL database

- Back up the “database.yml” file in C:\Program Files (x86)\CloudVolumes\Manager\config

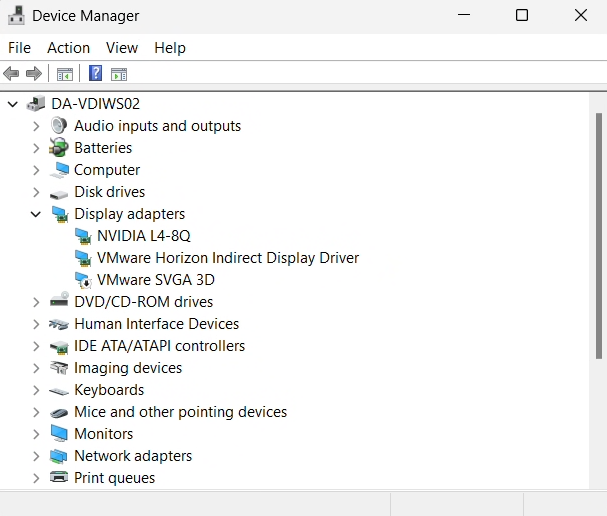
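Backing up database.yml is a plain file copy, but if you repeat it on several managers, a small Python sketch like this one keeps a timestamped copy outside the App Volumes install folder. The config path is the one given above and assumes a default install location; the function name and the backup folder are hypothetical.

```python
import shutil
from datetime import datetime
from pathlib import Path

# Config folder from this post; adjust if App Volumes Manager is installed elsewhere.
CONFIG_DIR = Path(r"C:\Program Files (x86)\CloudVolumes\Manager\config")


def backup_database_yml(backup_dir: Path, config_dir: Path = CONFIG_DIR) -> Path:
    """Copy database.yml into backup_dir under a timestamped name and return the copy's path."""
    source = config_dir / "database.yml"
    backup_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    target = backup_dir / f"database.yml.{stamp}.bak"
    shutil.copy2(source, target)  # copy2 also preserves the file's timestamps
    return target
```

For example, `backup_database_yml(Path(r"D:\Backups\AppVolumes"))` would drop the copy into a (hypothetical) D:\Backups\AppVolumes folder, created if it doesn’t exist.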

- Connect to all VMware App Volumes Manager servers via RDP or console access

- Stop all the App Volumes Services on ALL App Volumes Manager Servers

- Migrate the SQL database to a new Microsoft SQL Server (a standard deployment, or a highly available SQL Server Always On Availability Group)

- Update your ODBC Configuration on ALL your App Volumes Manager Servers

- Open “ODBC Data Source Administrator (64-Bit)” from the Windows Control Panel. Select your App Volumes ODBC connection and click “Configure”. Walk through the wizard and update it to point to the new SQL server and database. Make sure you test and confirm that the connection works.

- Open “ODBC Data Source Administrator (32-Bit)” from the Windows Control Panel. Select your App Volumes ODBC connection and click “Configure”. Walk through the wizard and update it to point to the new SQL server and database. Make sure you test and confirm that the connection works.

- If you’re using SQL Authentication, you’ll need to update the database.yml file on all your App Volumes Manager servers.

- Open C:\Program Files (x86)\CloudVolumes\Manager\config\database.yml

- Under “production:” add and/or modify the following two entries:

- username: <SQL Username>

- password: <SQL password>

- Replace <SQL Username> and <SQL password> with the credentials of the SQL service account that App Volumes Manager uses to access the SQL database. Please note: after the services start, the password will be removed from the configuration file.

- You can now start the App Volumes Manager services on ONE of your App Volumes Managers. Start only one: this lets you test the configuration, and that manager will also perform a discovery of the environment to determine active sessions and update the database.

- Monitor the logs and activity to confirm that everything is working.

- After you’ve confirmed that the migration succeeded and that the first App Volumes Manager is working, and once that server’s activity has gone idle, start the services on your other App Volumes Managers.
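The SQL Authentication change to database.yml described in the steps above can also be scripted when several managers need it. This is a minimal, stdlib-only Python sketch, not a VMware-provided tool: it assumes “production:” is a top-level key whose entries are flat `key: value` lines indented with spaces, and the function names are hypothetical. It writes both values as YAML single-quoted strings so that passwords containing characters such as `#` or `:` are not misread. Run it only while the App Volumes services are stopped, and keep the backup of the original file.

```python
from pathlib import Path
from typing import Dict, List


def set_production_credentials(text: str, username: str, password: str) -> str:
    """Return database.yml text with username/password set under 'production:'.

    Hypothetical helper; assumes a flat, space-indented 'production:' section.
    """
    lines: List[str] = text.splitlines()
    start = next((i for i, line in enumerate(lines) if line.rstrip() == "production:"), None)
    if start is None:
        raise ValueError("no top-level 'production:' section found")

    # The section ends at the next non-blank line that is not indented.
    end = len(lines)
    for i in range(start + 1, len(lines)):
        if lines[i].strip() and not lines[i].startswith((" ", "\t")):
            end = i
            break

    # Reuse the indentation of the section's first entry (default: two spaces).
    indent = "  "
    for line in lines[start + 1:end]:
        if line.strip():
            indent = line[: len(line) - len(line.lstrip())]
            break

    def yaml_quote(value: str) -> str:
        # YAML single-quoted scalar: embedded single quotes are doubled.
        return "'" + value.replace("'", "''") + "'"

    pending: Dict[str, str] = {"username": username, "password": password}
    insert_at = start + 1
    for i in range(start + 1, end):
        if not lines[i].strip():
            continue
        insert_at = i + 1  # position just after the last non-blank entry
        key = lines[i].strip().split(":", 1)[0]
        if key in pending:
            lines[i] = f"{indent}{key}: {yaml_quote(pending.pop(key))}"

    # Add whichever entries were not already present.
    lines[insert_at:insert_at] = [f"{indent}{k}: {yaml_quote(v)}" for k, v in pending.items()]
    return "\n".join(lines) + "\n"


def update_database_yml(path: Path, username: str, password: str) -> None:
    """Rewrite database.yml in place (run only while the services are stopped)."""
    text = path.read_text(encoding="utf-8")
    path.write_text(set_production_credentials(text, username, password), encoding="utf-8")
```

Splitting the text transformation from the file I/O makes it easy to preview the result with `print(set_production_credentials(...))` before calling `update_database_yml` on the real file.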

You have now successfully migrated your App Volumes SQL DB to a new server.